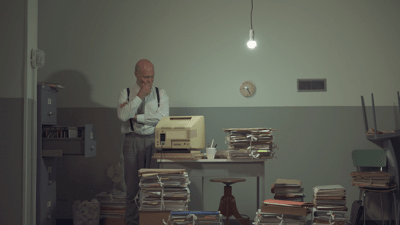

We wrote earlier that there is nothing wrong with managing databases in a somewhat older environment. But there is also nothing wrong with more mature database administrators. Thomas Spoelstra - yes, the guy from the man cave - is one of those. He knows better than anyone how to set up a database as efficiently as possible, because 'before' there simply wasn't any more disk space or memory. Now that we are massively switching to the cloud, with all its space and possibilities for scaling up, those efficiency skills no longer seem that important. Until the costs get out of hand. Then you need someone who can assess and fine-tune the content of the data model to reduce your cloud costs. So someone like Thomas...

Last century

You can call me an old DBA. I took my first steps into the wonderful world of databases in the last century. In 1989 to be exact. That wonderful world consisted of DMBS systems that younger colleagues have never heard of, such as INFOS-II and dBase III. Data was often stored in flat files and we were masters in generating keys and optimizing storage.

The key, the whole key and nothing but the key

One day I was introduced to a relational database system, Sybase 10, which runs on another relic: OpenVMS. One limitation I remember to this day was the maximum size of a two gigabyte database. We had to take care of placing different databases on different disks to increase the number of spindles and performance! At that time, serious thought had to be given to the design of the data model. Tables had to be kept compact and the data types for each column had to be carefully selected in order to normalize your data. The third normal form was considered sacred, with the mantra being: each attribute must represent a fact about the key, the whole key, and nothing but the key. You really had to have a seriously good reason not to normalize to this magical third normal form. Tuning and optimizing searches was an essential part of the daily tasks.

Fast forward

By now we are about three decades further on and the world has changed a lot. Hardware is cheaper than ever. In a way, it follows Moore's law, where machines double in power every few years and have hardly become more expensive in comparison. From discrete hard drives as database storage devices, we switched to RAID5 and had to deal with huge storage arrays with hardly any moving parts - apart from the fans. Platters and spindles? Hardly anybody talked about them anymore. Because when the database grows, you just add more storage space. Without blinking your eyes. Is performance deteriorating a bit? Just add a few CPUs with a few gigabytes of RAM. But this entails hidden costs.

Scared

With the speed at which we're embracing the cloud today - and all that goes with it, like DbaaS - I foresee that some traditional DBA skills are slowly becoming less important. Up to a certain point. We've seen this a number of times: companies go to the cloud and add storage and especially hardware as soon as performance issues arise. That happens a couple of times, until someone is shocked by the monthly bill. Then all of a sudden there's a shout: Why do we spend so much on cloud infrastructure for our database? What should we do to reduce our cloud spending?

Database neglect

We have assisted a number of clients with this question. One of the things we keep seeing returning is the lack of maintenance of the database. Rebuilding indexes, reclaiming space, reorganizing tables, are all things that are often neglected. Datatype mismatches in queries is another common phenomenon that contributes to poor performance. But also connections between tables that are not ideal to say the least; we have seen dramatic performance improvements simply by rewriting a query. And these are just a few simple examples.

Clammy hands

As long as DbaaS offers are flooding the market (think of AWS RDS and AWS Aurora, Azure SQL), such performance problems will remain. A few mouse clicks, your credit card number and there, your database is in the cloud. But as your organization grows, that 'small' database of 20 gigabytes will be a terabyte in size before you know it. See that your PostgreSQL database continues to perform, without getting clammy hands.

More limitations

And there are more limitations like that to the DbaaS concept. On a bare-metal PostgreSQL server, you can install an unlimited number of extensions, while a DbaaS instance severely limits you in that. On a bare metal instance you can install an extension and experiment with hypothetical indexes and partitions before taking the step to create the index or partition the table. Partitioning a huge table is no easy task and must be worth the time and effort. Easy to do on your bare-metal, but not supported on the DbaaS instances.

Threatened with extinction

Unfortunately, it looks like the traditional DBA skills are slowly becoming extinct and that is a shame. For who can still assess and fine-tune your data model in order to reduce your cloud costs? Who can still spend time looking at queries and data models and optimize them for maximum performance? Who can still spend time on draft a good maintenance plan for the databases? Who can still advise and coach the developers in best practices? Do you still have them, those old skool DBA skills? Then we are looking for you to join our team.

Is your database fit enough?

Feel free to contact us if you want to know more about the possibilities of a HealthCheck on your environment or if you are worried about the performance of your databases.

Other blogs about this subject: